Monitor your Kafka cluster running on Kubernetes with the Strimzi operator by deploying the OpenTelemetry Collector. The collector automatically discovers the Kafka broker pods and collects comprehensive metrics.

Arquitetura

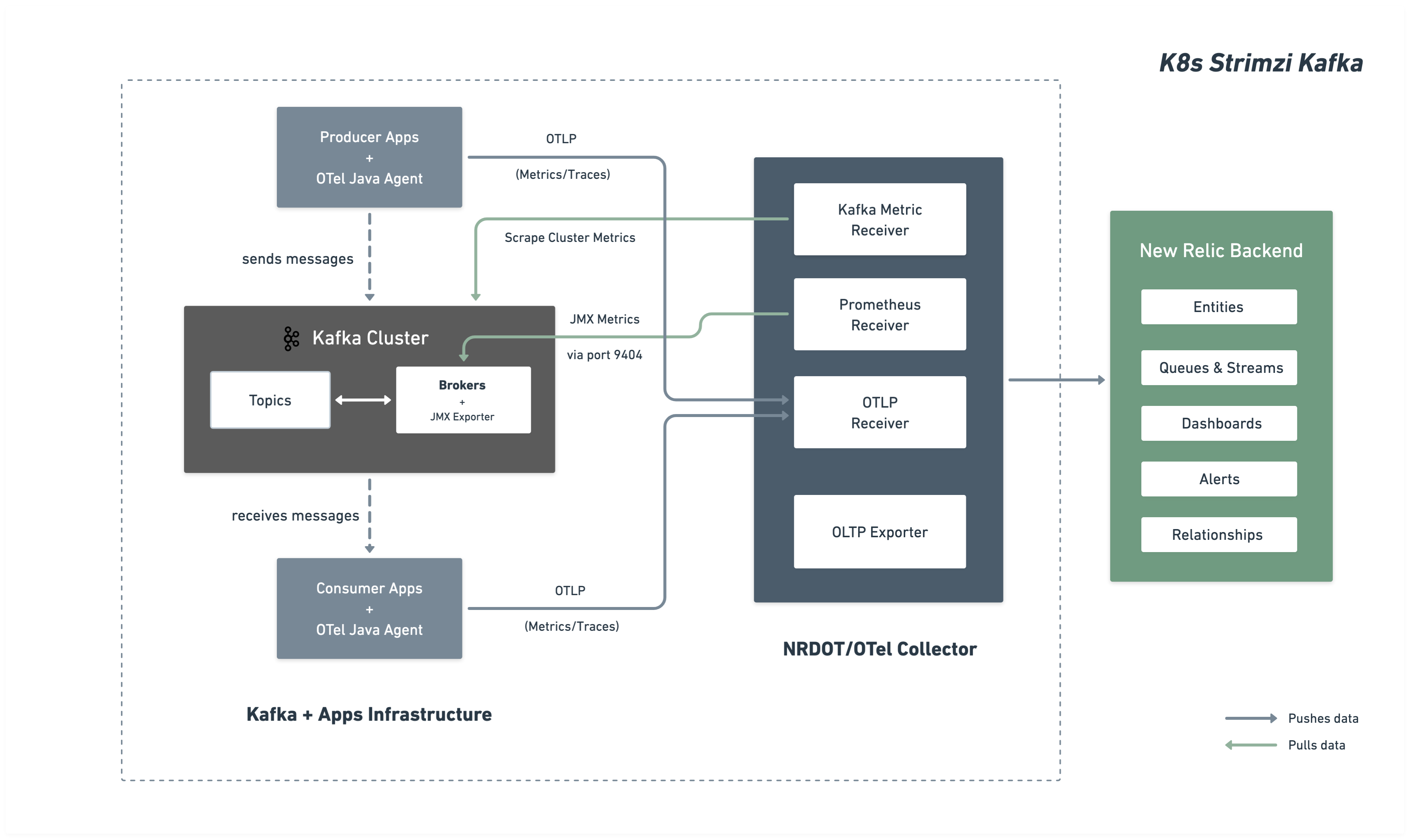

O diagrama a seguir ilustra a arquitetura de monitoramento e o fluxo de dados para o New Relic.

Installation steps

Follow these steps to set up monitoring for your Kafka cluster:

Before you begin

Make sure you have:

- A New Relic account with a

- A Kubernetes cluster with kubectl access

- Kafka deployed via the Strimzi operator

Configure the Kafka cluster for Kafka JMX metrics

Configure your Strimzi Kafka cluster to expose Kafka JMX metrics through the Prometheus JMX Exporter. This configuration is deployed as a ConfigMap and referenced by your Kafka cluster.

Create the JMX metrics ConfigMap

Create a ConfigMap with JMX Exporter patterns that define which Kafka metrics to collect. Save it as kafka-jmx-metrics-config.yaml:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: kafka-jmx-metrics
      namespace: newrelic
    data:
      kafka-metrics-config.yml: |
        startDelaySeconds: 0
        lowercaseOutputName: true
        lowercaseOutputLabelNames: true

        rules:
          # Cluster-level controller metrics
          - pattern: 'kafka.controller<type=KafkaController, name=GlobalTopicCount><>Value'
            name: kafka_cluster_topic_count
            type: GAUGE

          - pattern: 'kafka.controller<type=KafkaController, name=GlobalPartitionCount><>Value'
            name: kafka_cluster_partition_count
            type: GAUGE

          - pattern: 'kafka.controller<type=KafkaController, name=FencedBrokerCount><>Value'
            name: kafka_broker_fenced_count
            type: GAUGE

          - pattern: 'kafka.controller<type=KafkaController, name=PreferredReplicaImbalanceCount><>Value'
            name: kafka_partition_non_preferred_leader
            type: GAUGE

          - pattern: 'kafka.controller<type=KafkaController, name=OfflinePartitionsCount><>Value'
            name: kafka_partition_offline
            type: GAUGE

          - pattern: 'kafka.controller<type=KafkaController, name=ActiveControllerCount><>Value'
            name: kafka_controller_active_count
            type: GAUGE

          # Broker-level replica metrics
          - pattern: 'kafka.server<type=ReplicaManager, name=UnderMinIsrPartitionCount><>Value'
            name: kafka_partition_under_min_isr
            type: GAUGE

          - pattern: 'kafka.server<type=ReplicaManager, name=LeaderCount><>Value'
            name: kafka_broker_leader_count
            type: GAUGE

          - pattern: 'kafka.server<type=ReplicaManager, name=PartitionCount><>Value'
            name: kafka_partition_count
            type: GAUGE

          - pattern: 'kafka.server<type=ReplicaManager, name=UnderReplicatedPartitions><>Value'
            name: kafka_partition_under_replicated
            type: GAUGE

          - pattern: 'kafka.server<type=ReplicaManager, name=IsrShrinksPerSec><>Count'
            name: kafka_isr_operation_count
            type: COUNTER
            labels:
              operation: "shrink"

          - pattern: 'kafka.server<type=ReplicaManager, name=IsrExpandsPerSec><>Count'
            name: kafka_isr_operation_count
            type: COUNTER
            labels:
              operation: "expand"

          - pattern: 'kafka.server<type=ReplicaFetcherManager, name=MaxLag, clientId=Replica><>Value'
            name: kafka_max_lag
            type: GAUGE

          # Broker topic metrics (totals)
          - pattern: 'kafka.server<type=BrokerTopicMetrics, name=MessagesInPerSec><>Count'
            name: kafka_message_count
            type: COUNTER

          - pattern: 'kafka.server<type=BrokerTopicMetrics, name=TotalFetchRequestsPerSec><>Count'
            name: kafka_request_count
            type: COUNTER
            labels:
              type: "fetch"

          - pattern: 'kafka.server<type=BrokerTopicMetrics, name=TotalProduceRequestsPerSec><>Count'
            name: kafka_request_count
            type: COUNTER
            labels:
              type: "produce"

          - pattern: 'kafka.server<type=BrokerTopicMetrics, name=FailedFetchRequestsPerSec><>Count'
            name: kafka_request_failed
            type: COUNTER
            labels:
              type: "fetch"

          - pattern: 'kafka.server<type=BrokerTopicMetrics, name=FailedProduceRequestsPerSec><>Count'
            name: kafka_request_failed
            type: COUNTER
            labels:
              type: "produce"

          - pattern: 'kafka.server<type=BrokerTopicMetrics, name=BytesInPerSec><>Count'
            name: kafka_network_io
            type: COUNTER
            labels:
              direction: "in"

          - pattern: 'kafka.server<type=BrokerTopicMetrics, name=BytesOutPerSec><>Count'
            name: kafka_network_io
            type: COUNTER
            labels:
              direction: "out"

          # Per-topic metrics (only appear after traffic flows)
          - pattern: 'kafka.server<type=BrokerTopicMetrics, name=MessagesInPerSec, topic=(.+)><>Count'
            name: kafka_prod_msg_count
            type: COUNTER
            labels:
              topic: "$1"

          - pattern: 'kafka.server<type=BrokerTopicMetrics, name=BytesInPerSec, topic=(.+)><>Count'
            name: kafka_topic_io
            type: COUNTER
            labels:
              topic: "$1"
              direction: "in"

          - pattern: 'kafka.server<type=BrokerTopicMetrics, name=BytesOutPerSec, topic=(.+)><>Count'
            name: kafka_topic_io
            type: COUNTER
            labels:
              topic: "$1"
              direction: "out"

          # Request metrics
          - pattern: 'kafka.network<type=RequestMetrics, name=TotalTimeMs, request=(Produce|FetchConsumer|FetchFollower)><>99thPercentile'
            name: kafka_request_time_99p
            type: GAUGE
            labels:
              type: "$1"

          - pattern: 'kafka.network<type=RequestChannel, name=RequestQueueSize><>Value'
            name: kafka_request_queue
            type: GAUGE

          - pattern: 'kafka.server<type=DelayedOperationPurgatory, name=PurgatorySize, delayedOperation=(.+)><>Value'
            name: kafka_purgatory_size
            type: GAUGE
            labels:
              type: "$1"

          # Controller stats
          - pattern: 'kafka.controller<type=ControllerStats, name=LeaderElectionRateAndTimeMs><>Count'
            name: kafka_leader_election_rate
            type: COUNTER

          - pattern: 'kafka.controller<type=ControllerStats, name=UncleanLeaderElectionsPerSec><>Count'
            name: kafka_unclean_election_rate
            type: COUNTER

          # JVM Garbage Collection
          - pattern: 'java.lang<name=(.+), type=GarbageCollector><>CollectionCount'
            name: jvm_gc_collections_count
            type: COUNTER
            labels:
              name: "$1"

          # JVM Memory
          - pattern: 'java.lang<type=Memory><HeapMemoryUsage>max'
            name: jvm_memory_heap_max
            type: GAUGE

          - pattern: 'java.lang<type=Memory><HeapMemoryUsage>used'
            name: jvm_memory_heap_used
            type: GAUGE

          # JVM Threading and System
          - pattern: 'java.lang<type=Threading><>ThreadCount'
            name: jvm_thread_count
            type: GAUGE

          - pattern: 'java.lang<type=OperatingSystem><>SystemCpuLoad'
            name: jvm_system_cpu_utilization
            type: GAUGE

          # Broker uptime
          - pattern: 'java.lang<type=Runtime><>Uptime'
            name: kafka_broker_uptime
            type: GAUGE

          # Additional metrics: remove this section to reduce data ingest

          # Request latency: total count, 50th percentile, and average (99p kept above)
          - pattern: 'kafka.network<type=RequestMetrics, name=TotalTimeMs, request=(Produce|FetchConsumer|FetchFollower)><>Count'
            name: kafka_request_time_total
            type: COUNTER
            labels:
              type: "$1"

          - pattern: 'kafka.network<type=RequestMetrics, name=TotalTimeMs, request=(Produce|FetchConsumer|FetchFollower)><>50thPercentile'
            name: kafka_request_time_50p
            type: GAUGE
            labels:
              type: "$1"

          - pattern: 'kafka.network<type=RequestMetrics, name=TotalTimeMs, request=(Produce|FetchConsumer|FetchFollower)><>Mean'
            name: kafka_request_time_avg
            type: GAUGE
            labels:
              type: "$1"

          # Log flush metrics
          - pattern: 'kafka.log<type=LogFlushStats, name=LogFlushRateAndTimeMs><>Count'
            name: kafka_logs_flush_count
            type: COUNTER

          - pattern: 'kafka.log<type=LogFlushStats, name=LogFlushRateAndTimeMs><>50thPercentile'
            name: kafka_logs_flush_time_50p
            type: GAUGE

          - pattern: 'kafka.log<type=LogFlushStats, name=LogFlushRateAndTimeMs><>99thPercentile'
            name: kafka_logs_flush_time_99p
            type: GAUGE

          # JVM GC elapsed time
          - pattern: 'java.lang<name=(.+), type=GarbageCollector><>CollectionTime'
            name: jvm_gc_collections_elapsed
            type: COUNTER
            labels:
              name: "$1"

          # JVM Memory heap committed
          - pattern: 'java.lang<type=Memory><HeapMemoryUsage>committed'
            name: jvm_memory_heap_committed
            type: GAUGE

          # JVM class loading
          - pattern: 'java.lang<type=ClassLoading><>LoadedClassCount'
            name: jvm_class_count
            type: GAUGE

          # Additional JVM OS metrics
          - pattern: 'java.lang<type=OperatingSystem><>SystemLoadAverage'
            name: jvm_system_cpu_load_1m
            type: GAUGE

          - pattern: 'java.lang<type=OperatingSystem><>AvailableProcessors'
            name: jvm_cpu_count
            type: GAUGE

          - pattern: 'java.lang<type=OperatingSystem><>ProcessCpuLoad'
            name: jvm_cpu_recent_utilization
            type: GAUGE

          - pattern: 'java.lang<type=OperatingSystem><>OpenFileDescriptorCount'
            name: jvm_file_descriptor_count
            type: GAUGE

          # JVM Memory Pool
          - pattern: 'java.lang<type=MemoryPool, name=(.+)><Usage>used'
            name: jvm_memory_pool_used
            type: GAUGE
            labels:
              name: "$1"

          - pattern: 'java.lang<type=MemoryPool, name=(.+)><Usage>max'
            name: jvm_memory_pool_max
            type: GAUGE
            labels:
              name: "$1"

          - pattern: 'java.lang<type=MemoryPool, name=(.+)><CollectionUsage>used'
            name: jvm_memory_pool_used_after_last_gc
            type: GAUGE
            labels:
              name: "$1"

Tip

Customize metrics: This ConfigMap includes comprehensive Kafka broker, topic, request, controller, and JVM metrics. You can add or modify patterns by consulting the Prometheus JMX Exporter examples and the Kafka MBean documentation. See the JMX Exporter rules documentation for additional configuration options.
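When adding or modifying patterns, it can help to see how a rule turns an MBean attribute into a metric name and labels. The following is a minimal Python sketch of that idea, not the JMX Exporter's actual implementation: the `apply_rule` helper and the `orders` topic are hypothetical, and the real exporter applies further steps (such as lowercasing names) that are omitted here.

```python
import re

# Simplified model of one rule from the ConfigMap: a regex, a metric name,
# and label templates that may reference capture groups as $1, $2, ...
RULE = {
    "pattern": r"kafka.server<type=BrokerTopicMetrics, name=BytesInPerSec, topic=(.+)><>Count",
    "name": "kafka_topic_io",
    "labels": {"topic": "$1", "direction": "in"},
}


def apply_rule(rule, bean_string):
    """Return (metric_name, labels) if the rule matches, else None."""
    match = re.match(rule["pattern"], bean_string)
    if match is None:
        return None
    labels = {}
    for key, template in rule["labels"].items():
        # Replace each $N in the template with the N-th capture group.
        labels[key] = re.sub(
            r"\$(\d+)", lambda m: match.group(int(m.group(1))), template
        )
    return rule["name"], labels


# Hypothetical MBean string for a topic named "orders".
bean = "kafka.server<type=BrokerTopicMetrics, name=BytesInPerSec, topic=orders><>Count"
print(apply_rule(RULE, bean))
```

A string that does not match the pattern, such as a JVM memory bean, returns `None`, which mirrors how a bean falls through to the next rule in the list.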

Important

Namespace requirement: The JMX metrics ConfigMap and your Kafka cluster must be in the same namespace. In this guide, both are deployed in the newrelic namespace.
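The namespace requirement can be checked before anything is applied. The sketch below assumes both manifests have already been loaded into Python dictionaries and uses the field names from the ConfigMap and the Strimzi Kafka resource in this guide; `check_metrics_reference` is a hypothetical helper, not part of any tool.

```python
def check_metrics_reference(config_map, kafka):
    """Return a list of problems; an empty list means the reference looks consistent."""
    problems = []
    ref = kafka["spec"]["kafka"]["metricsConfig"]["valueFrom"]["configMapKeyRef"]
    if config_map["metadata"]["namespace"] != kafka["metadata"]["namespace"]:
        problems.append("ConfigMap and Kafka cluster are in different namespaces")
    if ref["name"] != config_map["metadata"]["name"]:
        problems.append("configMapKeyRef.name does not match the ConfigMap name")
    if ref["key"] not in config_map["data"]:
        problems.append("configMapKeyRef.key is not a key in the ConfigMap data")
    return problems


# Trimmed-down dictionaries mirroring the manifests in this guide.
config_map = {
    "metadata": {"name": "kafka-jmx-metrics", "namespace": "newrelic"},
    "data": {"kafka-metrics-config.yml": "rules: []"},
}
kafka = {
    "metadata": {"name": "my-cluster", "namespace": "newrelic"},
    "spec": {
        "kafka": {
            "metricsConfig": {
                "type": "jmxPrometheusExporter",
                "valueFrom": {
                    "configMapKeyRef": {
                        "name": "kafka-jmx-metrics",
                        "key": "kafka-metrics-config.yml",
                    }
                },
            }
        }
    },
}

print(check_metrics_reference(config_map, kafka))
```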

Apply the ConfigMap:

    $ kubectl apply -f kafka-jmx-metrics-config.yaml

Update the Kafka cluster to use the JMX Exporter

Update your Strimzi Kafka resource to reference the metrics ConfigMap:

    apiVersion: kafka.strimzi.io/v1beta2
    kind: Kafka
    metadata:
      name: my-cluster
      namespace: newrelic
    spec:
      kafka:
        version: X.X.X
        metricsConfig:
          type: jmxPrometheusExporter
          valueFrom:
            configMapKeyRef:
              name: kafka-jmx-metrics
              key: kafka-metrics-config.yml
        # ...rest of your Kafka configuration

Apply the changes. Strimzi performs a rolling restart of your Kafka brokers:

    $ kubectl apply -f kafka-cluster.yaml

After the rolling restart completes, each Kafka broker exposes Prometheus metrics on port 9404.
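To confirm that the rules took effect, you can compare the metric names a broker exposes against the names defined in the ConfigMap. The sketch below parses Prometheus text-format output and reports which expected names are absent. The sample text is illustrative rather than real broker output, `missing_metrics` is a hypothetical helper, and the exact exposed names may vary with the exporter version.

```python
def parse_metric_names(exposition_text):
    """Collect metric names from Prometheus text-format output."""
    names = set()
    for line in exposition_text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and HELP/TYPE comments
        # The metric name ends at the first '{' (labels) or whitespace (value).
        names.add(line.split("{", 1)[0].split()[0])
    return names


def missing_metrics(exposition_text, expected):
    """Return the expected metric names that do not appear in the output."""
    found = parse_metric_names(exposition_text)
    return sorted(name for name in expected if name not in found)


# Illustrative sample only; real output contains many more series.
SAMPLE = """\
# HELP kafka_partition_count illustrative help text
# TYPE kafka_partition_count gauge
kafka_partition_count 12.0
kafka_network_io{direction="in",} 1024.0
kafka_network_io{direction="out",} 2048.0
"""

expected = [
    "kafka_partition_count",
    "kafka_network_io",
    "kafka_partition_under_replicated",
]
print(missing_metrics(SAMPLE, expected))
```

One way to obtain real input for this check, assuming you have access to a broker pod, is to forward port 9404 with `kubectl port-forward` and fetch the `/metrics` path locally.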

Deploy the OpenTelemetry Collector

Deploy the OpenTelemetry Collector to monitor your Kafka cluster. Choose your preferred installation method:

Helm is the recommended approach for deploying the OpenTelemetry Collector on Kubernetes.

Create the New Relic credentials secret

Create a Kubernetes secret containing your New Relic license key and the OTLP endpoint. Choose the endpoint for your New Relic region:

Tip

For other endpoint configurations, see Configure your OTLP endpoint.

Create values.yaml with the collector configuration

Create a values.yaml file that contains the complete OpenTelemetry Collector configuration. Both the NRDOT and OpenTelemetry collectors use identical configuration and provide the same Kafka monitoring capabilities. Choose your preferred collector image:

For advanced configuration options, see these receiver documentation pages:

- Prometheus receiver documentation - Additional receiver configuration options

- Kafka metrics receiver documentation - Additional Kafka metrics configuration

Install the OpenTelemetry Collector with Helm

Add the Helm repository and install the OpenTelemetry Collector using the values.yaml file:

    $ helm repo add open-telemetry https://open-telemetry.github.io/opentelemetry-helm-charts
    $ helm upgrade kafka-monitoring open-telemetry/opentelemetry-collector \
        --install \
        --namespace newrelic \
        --create-namespace \
        -f values.yaml

Verify the deployment:

    # Check pod status
    $ kubectl get pods -n newrelic -l app.kubernetes.io/name=opentelemetry-collector

    # View logs to verify metrics collection
    $ kubectl logs -n newrelic -l app.kubernetes.io/name=opentelemetry-collector --tail=50

You should see logs indicating that the Kafka brokers are being scraped successfully on port 9404.
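If you want to script the pod status check rather than read the table by eye, the pod list can be requested as JSON by adding `-o json` to the `kubectl get pods` command. The sketch below summarizes readiness from that JSON; the sample document is trimmed and the pod names are hypothetical.

```python
import json


def summarize_pods(pod_list_json):
    """Map pod name -> True if the pod is Running and every container is ready."""
    pod_list = json.loads(pod_list_json)
    summary = {}
    for pod in pod_list.get("items", []):
        status = pod.get("status", {})
        containers = status.get("containerStatuses", [])
        ready = (
            status.get("phase") == "Running"
            and bool(containers)
            and all(c.get("ready", False) for c in containers)
        )
        summary[pod["metadata"]["name"]] = ready
    return summary


# Trimmed sample shaped like `kubectl get pods -o json` output; names are hypothetical.
SAMPLE_PODS = json.dumps({
    "items": [
        {
            "metadata": {"name": "collector-abc"},
            "status": {"phase": "Running", "containerStatuses": [{"ready": True}]},
        },
        {
            "metadata": {"name": "collector-def"},
            "status": {"phase": "Pending"},
        },
    ]
})

print(summarize_pods(SAMPLE_PODS))
```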

The manifest installation method gives you direct control over the Kubernetes resources without using Helm.

Create the New Relic credentials secret

Create a Kubernetes secret containing your New Relic license key and the OTLP endpoint. Choose the endpoint for your New Relic region:

Tip

For other endpoint configurations, see Configure your OTLP endpoint.

Create manifest files

Create the Kubernetes manifest files for your preferred collector. Both collectors use identical configuration; only the image differs.

Choose your collector option and create the three required files:

For advanced configuration options, see these receiver documentation pages:

- Prometheus receiver documentation - Additional receiver configuration options

- Kafka metrics receiver documentation - Additional Kafka metrics configuration

Deploy the manifests

Apply the Kubernetes manifests to deploy the OpenTelemetry Collector:

    # Create namespace if it doesn't exist
    $ kubectl create namespace newrelic --dry-run=client -o yaml | kubectl apply -f -

    # Apply RBAC configuration
    $ kubectl apply -f collector-rbac.yaml

    # Apply ConfigMap
    $ kubectl apply -f collector-configmap.yaml

    # Apply Deployment
    $ kubectl apply -f collector-deployment.yaml

Verify the deployment:

    # Check pod status
    $ kubectl get pods -n newrelic -l app=otel-collector

    # View logs to verify metrics collection
    $ kubectl logs -n newrelic -l app=otel-collector --tail=50

You should see logs indicating that the Kafka brokers are being scraped successfully on port 9404.

(Optional) Instrument producer or consumer applications

Important

Language support: Java applications support out-of-the-box Kafka client instrumentation using the OpenTelemetry Java Agent.

To collect application-level telemetry from your Kafka producer and consumer applications, use the OpenTelemetry Java Agent.

Instrument your Kafka application

Use an init container to download the OpenTelemetry Java Agent at runtime:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: kafka-producer-app
    spec:
      template:
        spec:
          initContainers:
            - name: download-java-agent
              image: busybox:latest
              command:
                - sh
                - -c
                - |
                  wget -O /otel-auto-instrumentation/opentelemetry-javaagent.jar \
                    https://github.com/open-telemetry/opentelemetry-java-instrumentation/releases/latest/download/opentelemetry-javaagent.jar
              volumeMounts:
                - name: otel-auto-instrumentation
                  mountPath: /otel-auto-instrumentation

          containers:
            - name: app
              image: your-kafka-app:latest
              env:
                - name: JAVA_TOOL_OPTIONS
                  value: >-
                    -javaagent:/otel-auto-instrumentation/opentelemetry-javaagent.jar
                    -Dotel.service.name=order-process-service
                    -Dotel.resource.attributes=kafka.cluster.name=my-cluster
                    -Dotel.exporter.otlp.endpoint=http://localhost:4317
                    -Dotel.exporter.otlp.protocol=grpc
                    -Dotel.metrics.exporter=otlp
                    -Dotel.traces.exporter=otlp
                    -Dotel.logs.exporter=otlp
                    -Dotel.instrumentation.kafka.experimental-span-attributes=true
                    -Dotel.instrumentation.messaging.experimental.receive-telemetry.enabled=true
                    -Dotel.instrumentation.kafka.producer-propagation.enabled=true
                    -Dotel.instrumentation.kafka.enabled=true
              volumeMounts:
                - name: otel-auto-instrumentation
                  mountPath: /otel-auto-instrumentation

          volumes:
            - name: otel-auto-instrumentation
              emptyDir: {}

Configuration notes:

- Replace order-process-service with a unique name for your producer or consumer application

- Replace my-cluster with the same cluster name used in your collector configuration

- The endpoint http://localhost:4317 assumes the collector is running as a sidecar in the same pod or is reachable via localhost
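The three values called out in these notes are the ones that change per application. As a rough sketch, a small Python helper (hypothetical, named `build_java_tool_options` here) can assemble the varying part of JAVA_TOOL_OPTIONS so that the service name, cluster name, and endpoint are set in one place. The remaining `-Dotel.*` flags from the manifest are omitted for brevity and would need to be appended.

```python
def build_java_tool_options(service_name, cluster_name,
                            otlp_endpoint="http://localhost:4317"):
    """Assemble the per-application part of JAVA_TOOL_OPTIONS as one string."""
    options = [
        # Agent path matches the mountPath used in the manifest above.
        "-javaagent:/otel-auto-instrumentation/opentelemetry-javaagent.jar",
        f"-Dotel.service.name={service_name}",
        f"-Dotel.resource.attributes=kafka.cluster.name={cluster_name}",
        f"-Dotel.exporter.otlp.endpoint={otlp_endpoint}",
        "-Dotel.exporter.otlp.protocol=grpc",
    ]
    return " ".join(options)


print(build_java_tool_options("order-process-service", "my-cluster"))
```

Passing a different `otlp_endpoint` covers the case where the collector is not reachable via localhost.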

Tip

The configuration above sends telemetry to an OpenTelemetry Collector. If you need a collector to receive this telemetry, deploy one as described in Step 3 with this configuration:

The Java Agent provides out-of-the-box Kafka instrumentation with no code changes, capturing:

- Request latencies

- Throughput metrics

- Error rates

- Distributed traces

For advanced configuration, see the Kafka instrumentation documentation.

Find your data

After a few minutes, your Kafka metrics should appear in New Relic. See Find your data for detailed instructions on exploring your Kafka metrics in the different views of the New Relic UI.

You can also query your data with NRQL:

    FROM Metric SELECT * WHERE kafka.cluster.name = 'my-kafka-cluster'

Troubleshooting

Next steps

- Explore Kafka metrics - View the complete metrics reference

- Create custom dashboards - Build visualizations for your Kafka data

- Set up alerts - Monitor critical metrics such as consumer lag and under-replicated partitions